Visual Eyes – Advanced IoT Strategies with Smart Cameras & Edge AI

What makes the combination of cameras and edge AI so compelling, why is it happening now, and how can you start planning your camera and Edge AI project?

Boosting your IoT strategy with smart cameras and Edge AI

An engineer prepares to enter the red zone in an oil and gas refinery. A leak of hydrogen sulphide gas in this zone could be fatal. The security camera at the entrance checks that the engineer is wearing emergency breathing apparatus before releasing the door lock.

This is camera and Edge AI in action.

Without built-in intelligence, hundreds of cameras in the refinery would stream video to banks of monitors for an operator to check. Or they could stream video to the cloud for analysis, creating a significant and costly load on bandwidth, network infrastructure and cloud compute. Neither option provides the reliable, real-time response required for safe and efficient operations.

Together, however, the Internet of Things (IoT) and Edge AI are transforming the commercial and industrial landscape. According to Tractica, AI edge device shipments are expected to increase from 161.4 million units in 2018 to 2.6 billion units by 2025.

What do we mean by the ‘Edge’ for AI?

The edge is the physical location where things and people connect with the networked, digital world. An IoT device or sensor is also called an endpoint.

Gartner defines edge computing as ‘part of a distributed computing topology where information processing is located close to the edge, where things and people produce or consume that information’.

The edge consists of many layers at which processing and AI can be deployed. Endpoints and network resources (routers, gateways, servers and micro datacentres) are all candidates for edge computing.

What’s driving AI to the edge?

Machine learning – and deep learning in particular – requires intensive compute power. Running ML in remote data centres or the cloud has – until recently – been the only option.

But the proliferation of IoT devices and the need for real-time responses have created a number of challenges for this centralized model.

Massive data

Billions of connected IoT devices create a massive amount of data, even if each one only generates a small amount. It’s estimated that security cameras create about 2,500 petabytes of data globally each day.

Sending this data to the cloud for storage and processing is often impractical, inefficient and expensive – especially if much of the data is irrelevant (for example, security footage when nothing is happening).

Edge AI can dramatically reduce the amount of data sent to the cloud, significantly reducing operating costs and the total cost of ownership of the devices.
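To make the saving concrete, here is a back-of-the-envelope sketch. The 4 Mbit/s bitrate and the 2% "something is happening" share are hypothetical figures chosen for illustration, not measurements, and `daily_upload_gb` is a helper name invented for this example.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def daily_upload_gb(bitrate_mbps: float, active_fraction: float = 1.0) -> float:
    """Return gigabytes uploaded per day by one camera.

    bitrate_mbps    -- assumed video bitrate in megabits per second
    active_fraction -- share of the day during which video is actually sent
    """
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    bytes_per_second = bitrate_mbps * 1_000_000 / 8
    return bytes_per_second * SECONDS_PER_DAY * active_fraction / 1e9


# Hypothetical figures: a 4 Mbit/s stream, with events in 2% of the footage.
always_streaming = daily_upload_gb(4.0)
events_only = daily_upload_gb(4.0, active_fraction=0.02)
print(f"Continuous streaming: {always_streaming:.1f} GB/day per camera")
print(f"Events only:          {events_only:.2f} GB/day per camera")
```

Multiply the per-camera figures by the number of cameras on a site to see how quickly continuous streaming adds up.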

Latency

Many use cases need real-time responses. Examples include:

- Mission-critical applications, such as autonomous vehicles

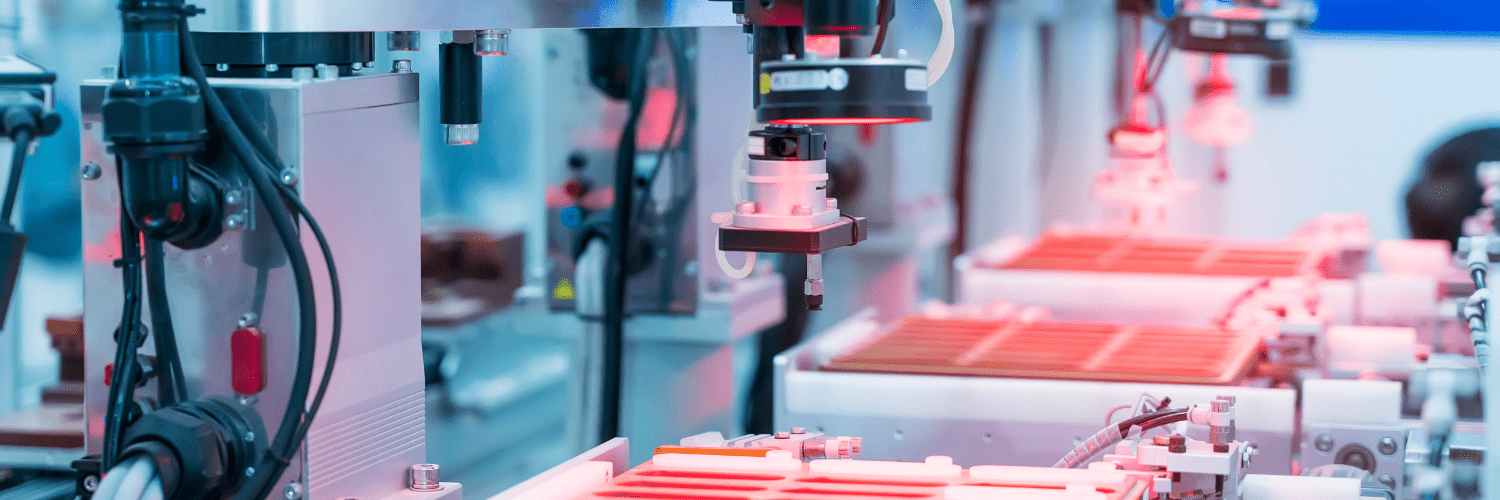

- Quality control systems that check for faults on production lines

- Safety-critical systems, such as monitoring pipe integrity in a refinery

Waiting for a response from cloud analytics can introduce unacceptable delays. Edge AI eliminates the latency introduced by a round trip to the cloud.
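A simple latency budget shows why the round trip matters. The sketch below assumes a camera running at 30 frames per second that must produce a decision before the next frame arrives. All timings are hypothetical, and the function names are invented for this example.

```python
def end_to_end_latency_ms(inference_ms: float, network_round_trip_ms: float = 0.0) -> float:
    """Total time from captured frame to decision, in milliseconds."""
    return inference_ms + network_round_trip_ms


def meets_deadline(latency_ms: float, deadline_ms: float) -> bool:
    """True if the decision arrives within the allowed time."""
    return latency_ms <= deadline_ms


# Assumed deadline: one frame period at 30 frames per second (about 33 ms).
frame_period_ms = 1000 / 30

# Hypothetical timings: fast cloud hardware plus a network round trip,
# versus slower on-device inference with no round trip at all.
cloud = end_to_end_latency_ms(inference_ms=10, network_round_trip_ms=80)
edge = end_to_end_latency_ms(inference_ms=25)

print(f"Cloud: {cloud:.0f} ms, within deadline: {meets_deadline(cloud, frame_period_ms)}")
print(f"Edge:  {edge:.0f} ms, within deadline: {meets_deadline(edge, frame_period_ms)}")
```

With these assumed numbers, the edge device meets the deadline even though its inference is slower, because it avoids the network entirely.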

Privacy

Strict regulations govern the use and transfer of personal data (including biometric and image data). This creates complexity, particularly if data crosses country boundaries. Customers want companies to treat their data correctly, and the publicity from privacy breaches can damage brands.

With edge AI, personal data is kept close to the source, reducing the risk of privacy breaches and simplifying compliance with regulations.

Keeping personal data private (and not sending it to the cloud) creates more opportunities for personalised learning applications.

Security

Connecting industrial machines or legacy equipment to the internet can introduce vulnerabilities to hacking. Processing the data on or near the devices reduces these risks.

Limiting the data sent to the cloud can reduce the chance of cybersecurity attacks on data in transit. Intelligent edge nodes can determine the appropriate security mechanisms to use.

However, each added processing node enlarges the attack surface, and every node must be protected.

Partitioning – what should be done at the edge?

Cloud and edge AI can work in partnership. The cloud is best for handling big data, training neural network models, orchestration, and running inferences that are complex, depend on off-device data or can be done offline. Edge AI is best for inference on smaller, self-contained models where autonomy or a fast response is required.

For optimum performance, you may choose to deploy ML on several resources: cloud, edge nodes and endpoints. Unless edge devices need to be completely autonomous, a hybrid model may be the best solution.

To determine where to deploy ML, you need to understand your requirements for autonomy and latency. You also need to identify the data that must be transferred to the cloud for analysis, and the data that can be processed with the available compute power on edge devices.

Your project requirements determine how you should partition the AI in a way that both meets the constraints of the edge devices and achieves the objectives of your project.
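The partitioning criteria above can be expressed as a rule of thumb. This is a minimal sketch rather than a definitive method: the `Workload` fields, the `choose_placement` function and the 100 ms default cloud round trip are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    EDGE = "edge"
    CLOUD = "cloud"
    HYBRID = "hybrid"


@dataclass
class Workload:
    name: str
    max_latency_ms: float        # slowest acceptable response
    must_run_offline: bool       # device must work without a connection
    needs_off_device_data: bool  # inference depends on data held elsewhere
    fits_on_device: bool         # model fits the endpoint's compute and memory


def choose_placement(w: Workload, cloud_round_trip_ms: float = 100.0) -> Placement:
    """Suggest where inference should run, using the article's criteria."""
    # Autonomy or a deadline tighter than the cloud round trip pulls towards the edge.
    needs_edge = w.must_run_offline or w.max_latency_ms < cloud_round_trip_ms
    # Off-device data or a model too large for the endpoint pulls towards the cloud.
    needs_cloud = w.needs_off_device_data or not w.fits_on_device
    if needs_edge and needs_cloud:
        return Placement.HYBRID  # split the work between edge and cloud
    if needs_edge:
        return Placement.EDGE
    return Placement.CLOUD


# Hypothetical workloads
door_check = Workload("PPE check at door", 50, True, False, True)
trend_report = Workload("Weekly incident trends", 3_600_000, False, True, False)
print(door_check.name, "->", choose_placement(door_check).value)
print(trend_report.name, "->", choose_placement(trend_report).value)
```

A real project would weigh further factors, such as cost, model update frequency and security, but even a simple rule like this forces the autonomy and latency requirements to be stated explicitly.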

Identifying what matters for your Edge AI project

You need to understand how your requirements fit with the constraints of edge AI. For example:

- Accuracy – models need to be optimized to run in constrained environments, which can result in reduced accuracy but faster predictions. You need to understand what trade-offs are acceptable.

- Response times – the speed with which you need to serve predictions determines how close to the device you need the ML.

- Autonomy – you need to determine if the device can – or must – work independently of a central system.

- Data processing – ML models in the cloud often process data in batches, while ML at the edge processes data in real time. This can influence the design and architecture of your models.

- Training – models can learn and improve from the data they process. For example, initially a health monitoring application may detect if a person is wearing a mask. Later, it may learn how to check that the mask is being worn correctly. You need to collect data for continuous improvement.

- Model updates – the likely frequency and complexity of updating distributed models can impact the choice of architecture and tools.

- Auditing and explainability – regulations on auditing and explaining decisions made by your AI can impact the information you need to store about ML models and the decisions they make.

- Privacy – collecting personal image data can create privacy concerns. You need to identify how you will address these and comply with regulations.

- Security – you need to identify security requirements (for example, encryption, identity and access management) for all edge AI devices.

What can cameras and Edge AI do?

Many applications for cameras and Edge AI already exist, and many more will become viable as technologies mature. Sensor fusion (combining data from multiple sensors of the same or different types) enables further innovation and development opportunities by providing a more accurate world model.

Smart vehicles

- Fully autonomous vehicles are in an AI category of their own, but smart cameras can improve vehicle safety in other ways.

- Cameras can analyse road conditions and driver behaviour (such as falling asleep) to alert the driver and reduce accident rates.

- Cameras on vehicles can also assist with safe manoeuvring and parking.

Smart homes and devices

- Facial recognition can support home security, for example, allowing access to family members and warning of intruders.

- Mood recognition enables smart devices to tailor services, such as creating mood-appropriate playlists.

Workplace safety and security

- Facial recognition can enable security measures, such as controlling access to buildings or restricted zones.

- Cameras – in conjunction with other sensors – can identify trip hazards, slippery surfaces and leaks, and track the movements of cranes and other industrial vehicles.

- In a pandemic, cameras can check mask-wearing, social distancing and even hand washing practices. Thermal cameras can highlight raised temperatures, and audio from cameras can detect bouts of coughing.

- Cameras in smoke detectors can identify the location and severity of a fire, enabling targeted use of sprinklers and providing detailed information to emergency responders.

A vision for the future

Cameras and edge AI can deliver real value to companies that see their potential and can address the challenges they bring. Steadily maturing Edge AI and camera technologies, sensor fusion and 5G are driving the opportunities for successful and innovative use cases.

But achieving ROI isn’t easy. From proof of concept and prototype to development, deployment and operations, there are many pitfalls for the unwary.

Is Edge for you? Get in touch with us

Consult Red is a technology consulting company helping clients deliver connected devices and systems, supporting them through the entire development journey.

Contact us to talk about achieving value with your camera and Edge AI project.